AI Support for NATO Operational Planning

BioNeuroCognitive Complex Reasoning for Traceable, Evidence-Based, and Explainable Military Planning

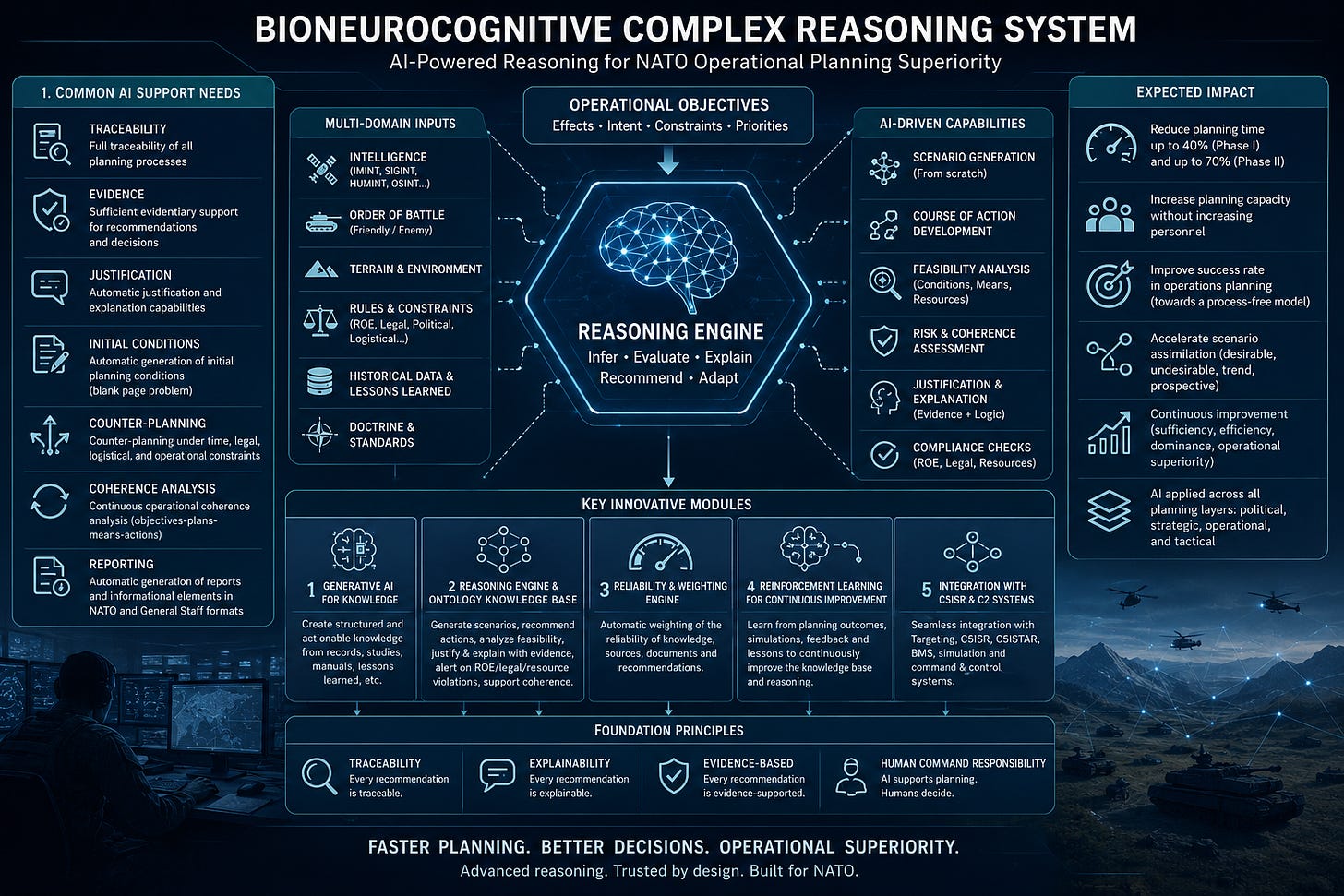

I am sharing a brief extract from our project to design and deploy an advanced BioNeuroCognitive Complex Reasoning System intended to improve NATO military operational planning systems.

The objective is not merely to add artificial intelligence to existing planning workflows.

The objective is deeper.

It is to improve the quality, speed, traceability, coherence, evidentiary support, and explainability of military operational planning across all its phases, while maintaining human command responsibility, legal control, doctrinal alignment, and operational accountability.

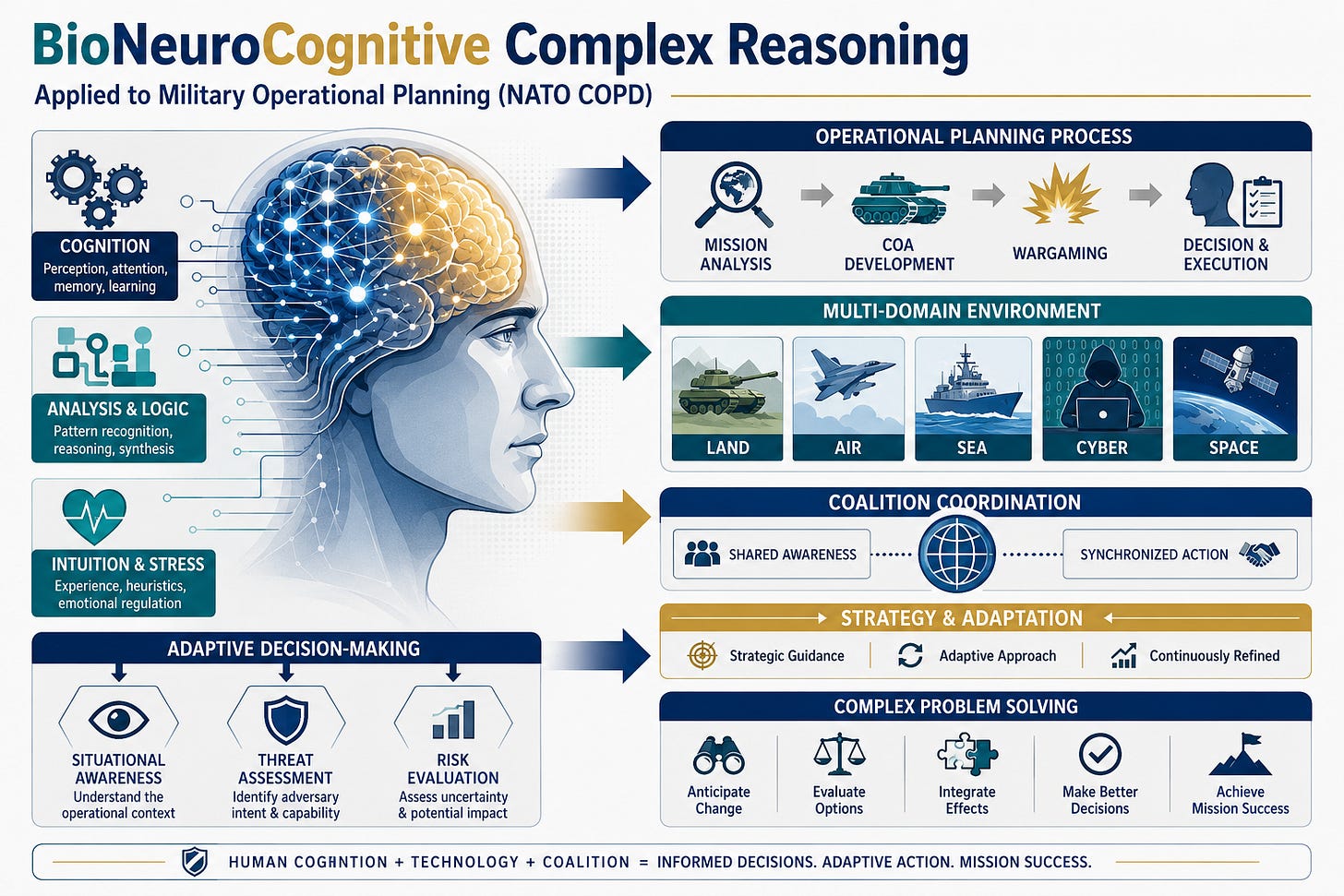

Modern military planning is increasingly exposed to extreme complexity: multi-domain operations, compressed decision windows, hybrid threats, legal constraints, logistical uncertainty, intelligence volatility, changing objectives, and the need to coordinate political, strategic, operational, and tactical layers.

In that context, AI should not function as a decorative assistant or as a generic document generator.

It must become a reasoning support architecture.

That is the core of the project we are developing at WarMind Labs.

The planning problem

Military operational planning is not a simple administrative sequence.

It is a structured reasoning process under uncertainty.

Each planning phase must transform objectives, constraints, intelligence, resources, risks, legal conditions, operational experience, and command intent into coherent courses of action.

This requires continuous reasoning about:

Objectives

Means

Constraints

Risks

Legal limits

Operational feasibility

Intelligence assumptions

Alternative scenarios

Command priorities

Resource availability

Time pressure

Expected effects

Possible second-order consequences

The difficulty is not only producing a plan.

The difficulty is producing a plan that is coherent, justified, adaptable, legally compliant, operationally feasible, and traceable.

This is where current planning systems often reveal structural limitations. They may store information, support workflows, produce documentation, or assist coordination, but they do not always provide the depth of reasoning needed to justify and continuously adapt decisions in complex operational environments.

Common AI support needs across NATO Operational Planning

Across all phases of NATO Operational Planning, we have identified a set of common AI support needs.

These needs are not peripheral. They are central to the modernization of military planning.

1. Full traceability of planning processes

Every relevant process included in each planning phase should be traceable.

This means understanding:

What was considered

Which assumptions were used

Which evidence was available

Which alternatives were evaluated

Which recommendations were generated

Which decisions were made

Which actors or systems contributed to each step

Why a specific planning path was selected

Traceability is essential for command responsibility, lessons learned, operational review, auditability, and continuous improvement.
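As an illustration only, the traceability questions above can be captured in an append-only record structure. This is a minimal sketch, not the project's actual design: the class names `TraceRecord` and `TraceLog`, and every field name, are assumptions introduced here for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class TraceRecord:
    """One traceable planning step (all field names are hypothetical)."""
    phase: str                # planning phase the step belongs to
    step: str                 # what was considered
    assumptions: list[str]    # which assumptions were used
    evidence: list[str]       # identifiers of the evidence that was available
    alternatives: list[str]   # which alternatives were evaluated
    recommendation: str       # which recommendation was generated
    decision: str             # which decision was made
    contributors: list[str]   # actors or systems that contributed
    rationale: str            # why this planning path was selected
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class TraceLog:
    """Append-only log: records can be added and queried, not edited."""

    def __init__(self) -> None:
        self._records: list[TraceRecord] = []

    def append(self, record: TraceRecord) -> None:
        self._records.append(record)

    def by_phase(self, phase: str) -> list[TraceRecord]:
        return [r for r in self._records if r.phase == phase]

    def by_contributor(self, name: str) -> list[TraceRecord]:
        return [r for r in self._records if name in r.contributors]
```

Keeping the log append-only is one simple way to support later review and audit, since earlier steps cannot be silently rewritten.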

2. Evidentiary support for recommendations and decisions

Operational recommendations and decisions should not appear as isolated outputs.

They must be supported by evidence.

A planning support system should be able to connect each recommendation to the intelligence, doctrine, operational constraints, prior experience, simulated scenarios, legal conditions, and reasoning sequences that justify it.

This is especially important in environments where decisions may have strategic, political, legal, and human consequences.

An AI system that cannot show the evidentiary basis of its recommendation is not sufficient for military planning.
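One way to make this requirement checkable is to refuse to surface a recommendation until every required evidence category has at least one linked item. The sketch below assumes a hypothetical `Recommendation` type and category names derived from the paragraph above; it is not a description of the deployed system.

```python
from dataclasses import dataclass, field

# Categories taken from the text above; the short identifiers are illustrative.
EVIDENCE_CATEGORIES = (
    "intelligence",
    "doctrine",
    "operational_constraints",
    "prior_experience",
    "simulated_scenarios",
    "legal_conditions",
    "reasoning_sequence",
)


@dataclass
class Recommendation:
    text: str
    # category -> identifiers of the supporting items (hypothetical IDs)
    evidence: dict[str, list[str]] = field(default_factory=dict)


def unsupported_categories(rec, required=EVIDENCE_CATEGORIES):
    """Return the required categories that have no linked evidence."""
    return [c for c in required if not rec.evidence.get(c)]


def is_presentable(rec, required=EVIDENCE_CATEGORIES):
    """A recommendation is presentable only if no category is unsupported."""
    return not unsupported_categories(rec, required)
```

Which categories are mandatory for a given planning phase is a policy choice; here it is passed in as the `required` parameter rather than fixed in the code.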

3. Automatic justification and explanation

Military planners do not only need recommendations.

They need explanations.

A useful system must be able to explain:

Why a recommendation was produced

Which assumptions were used

Which evidence supports it

Which constraints limit it

Which risks it introduces

Which alternatives were considered

Why some alternatives were rejected

What would change the recommendation

This is one of the central differences between generic AI assistance and complex reasoning support.

Planning requires justified knowledge, not fluent output.

4. Automatic generation of initial planning conditions

One of the recurring problems in planning is the blank page problem.

When a new operational objective is defined, planners must generate the initial planning conditions, identify relevant precedents, establish assumptions, define constraints, and structure the first analytical space.

An advanced reasoning system should be able to generate initial planning conditions from:

Operational objectives

Prior experience

Real or simulated situations

Lessons learned

Strategic studies

Operational records

Doctrine

Manuals

Intelligence inputs

Legal and logistical constraints

This does not replace planners.

It accelerates the initial cognitive structuring of the planning problem.

5. Counter-planning under constraints

Operational planning rarely unfolds in ideal conditions.

Objectives change. Time compresses. Intelligence evolves. Resources become unavailable. Legal constraints become decisive. Logistics impose limits. The enemy adapts. Friendly and enemy orders of battle shift. Political priorities may expand or restrict what is operationally possible.

For that reason, the system must support counter-planning capabilities.

It should help planners reason through changes such as:

Time constraints

Legal constraints

Logistical constraints

Changes in friendly order of battle

Changes in enemy order of battle

Variation or expansion of objectives

New operational intelligence

Changes in resource availability

Changes in operational risk

Changes in rules of engagement

This capability is essential for adaptive planning.

A plan is not enough.

The planning system must help reason about how the plan breaks, adapts, or evolves.
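A simple way to picture this is dependency tracking: each plan element records the conditions it relies on, so that a change in any condition immediately identifies what must be re-examined. The following is a minimal sketch under that assumption; `PlanDependencies` and its method names are hypothetical.

```python
from collections import defaultdict


class PlanDependencies:
    """Maps each condition (assumption or constraint) to the plan elements relying on it."""

    def __init__(self) -> None:
        self._dependents: dict[str, set[str]] = defaultdict(set)

    def depends_on(self, element: str, condition: str) -> None:
        """Record that a plan element relies on a given condition."""
        self._dependents[condition].add(element)

    def affected_by(self, changed_conditions: list[str]) -> list[str]:
        """Return, sorted, every plan element touched by the changed conditions."""
        affected: set[str] = set()
        for condition in changed_conditions:
            # .get avoids creating empty entries for unknown conditions
            affected |= self._dependents.get(condition, set())
        return sorted(affected)
```

The sketch only flags what needs review. Deciding how the plan should adapt remains a reasoning task for planners and for the reasoning engine.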

6. Continuous operational coherence analysis

A military plan must remain coherent across objectives, plans, means, and actions.

This is a major reasoning challenge.

The system must continuously analyze whether:

The objectives remain aligned with the plan

The means are sufficient for the objectives

The proposed actions are consistent with the means

The operational design remains feasible

The selected Course of Action (COA) remains coherent under changing conditions

The plan violates constraints, assumptions, or legal boundaries

The intended effects remain connected to the operational logic

This can be understood as a permanent objectives-plans-means-actions coherence analysis.

The goal is to detect incoherence before it becomes operational failure.
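Parts of this coherence analysis can be expressed as explicit checks. The sketch below covers only three of them, under simplifying assumptions: every action names the objective it serves, means are countable units per resource, and the names `Action` and `coherence_findings` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Action:
    name: str
    objective: str          # objective the action is meant to serve
    needs: dict[str, int]   # resource name -> units required


def coherence_findings(objectives, means, actions):
    """Return human-readable incoherence findings; an empty list means none found."""
    findings = []
    served = set()
    demand = {}
    for action in actions:
        if action.objective not in objectives:
            findings.append(
                f"action '{action.name}' serves unknown objective '{action.objective}'"
            )
        else:
            served.add(action.objective)
        for resource, qty in action.needs.items():
            demand[resource] = demand.get(resource, 0) + qty
    # Objectives with no action behind them
    for objective in sorted(set(objectives) - served):
        findings.append(f"objective '{objective}' has no supporting action")
    # Total demand compared with available means
    for resource in sorted(demand):
        available = means.get(resource, 0)
        if demand[resource] > available:
            findings.append(
                f"means insufficient for '{resource}': "
                f"need {demand[resource]}, have {available}"
            )
    return findings
```

Running such checks every time objectives, means, or actions change is what makes the analysis continuous rather than a one-off review.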

7. Automatic generation of reports in NATO and General Staff formats

Planning systems must also support the automatic generation of structured reports and other informational elements in NATO and General Staff formats.

This is not merely a documentation function.

If properly designed, automatic report generation becomes a way of preserving reasoning structure.

Reports should not simply summarize outputs. They should reflect evidence, assumptions, decisions, recommendations, uncertainties, alternatives, and operational logic.

The document becomes a reasoning artifact.

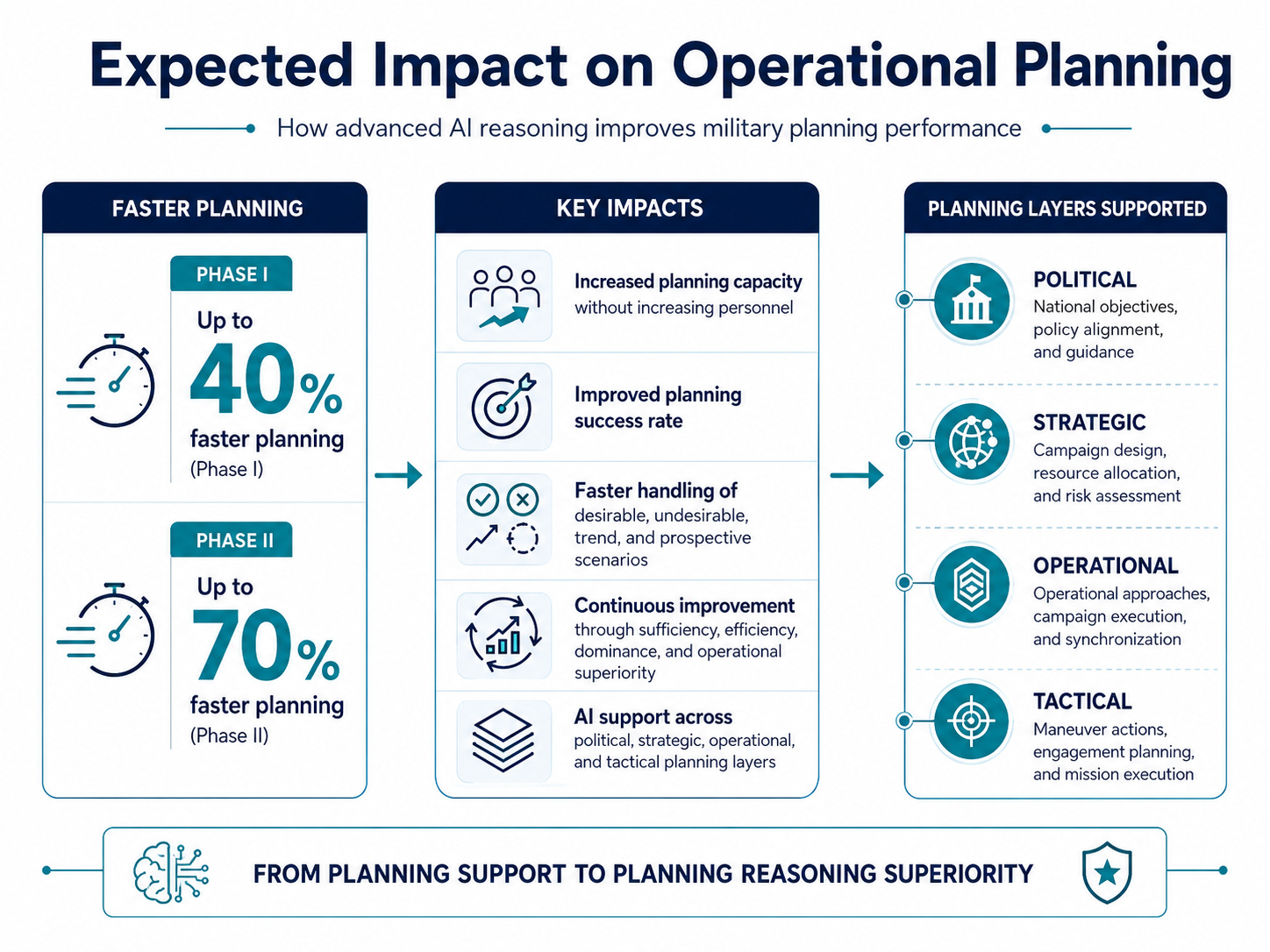

Expected impact

The expected impact of this type of system is significant.

In Phase I, we estimate that operational planning time may be reduced by up to 40%.

In Phase II, with more mature automation, integration, learning, and reasoning capabilities, planning time may be reduced by up to 70%.

These reductions are not based on the idea of replacing planners. They are based on reducing friction in the planning process, accelerating knowledge retrieval, improving initial structuring, automating justification support, and enabling faster scenario comparison.

The expected benefits include:

Optimization of operational planning processes

Significant reduction of planning time

Increased planning capacity without increasing personnel

Improved planning success rates

Movement toward a less process-bound planning model

Faster assimilation of contrasting operational scenarios

Better use of desirable, undesirable, trend, and prospective scenarios

Continuous improvement based on sufficiency, efficiency, dominance, and operational superiority

Application of AI across all layers of military planning

This last point is especially important.

AI support should not be limited to technical or tactical layers. It should support military planning across:

Political planning

Strategic planning

Operational planning

Tactical planning

Each layer has different constraints, timescales, risks, and decision structures. But all of them require reasoning.

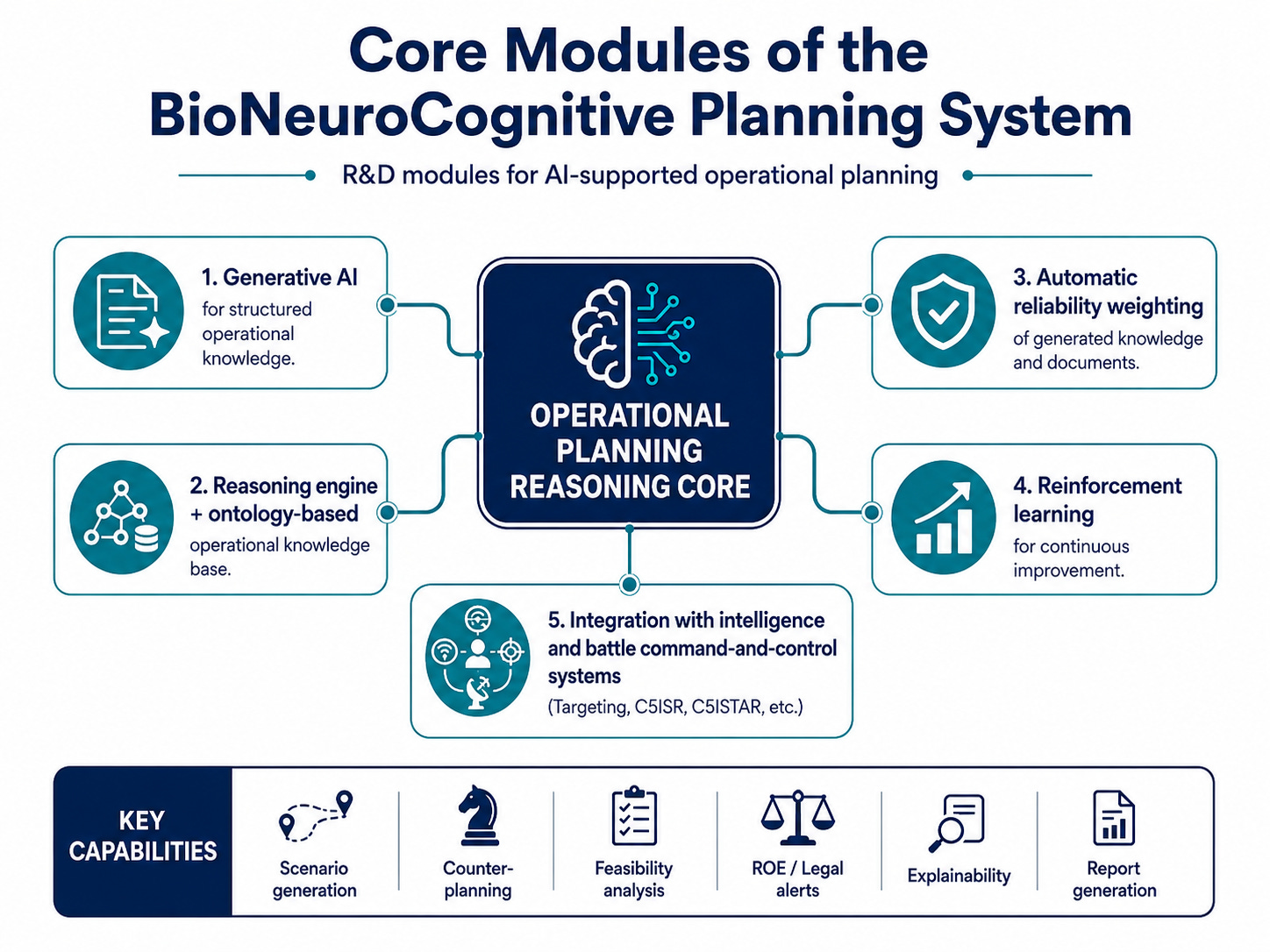

Key innovative modules of the system

The system we are developing through this R&D project includes several key innovative modules.

1. Generative AI for structured and actionable operational knowledge

The first module is a generative AI system for the automatic creation of structured and actionable knowledge from existing operational materials.

These may include:

Operational records

Strategic studies

Lessons learned

Operating manuals

Doctrine

Planning archives

Simulation outputs

Prior campaign analysis

General Staff documentation

The objective is not merely summarization.

The objective is to transform existing materials into structured operational knowledge that can be searched, reasoned over, reused, validated, and connected to planning processes.

2. Reasoning engine and operational ontology-based knowledge base

The second module is the core of the system.

It consists of a reasoning engine and an operational ontology-based knowledge base.

This module must be capable of:

Generating initial operational scenarios from scratch

Working from established objectives and operational constraints

Supporting counter-planning when objectives or constraints change

Recommending actions in the processes of each planning phase

Analyzing the feasibility of decisions, conditions, and actions

Justifying recommended actions with evidence and logic

Explaining the reasoning path behind each recommendation

Alerting to potential violations of Rules of Engagement

Alerting to potential violations of applicable legal frameworks

Alerting to resource limitations affecting a Course of Action

Supporting operational coherence analysis

Connecting objectives, means, plans, actions, and expected effects

This is the difference between an AI assistant and an operational reasoning system.

The reasoning engine does not merely produce text.

It supports structured military thought.

3. Automatic weighting of reliability

The third module concerns reliability.

Operational planning depends on knowledge of varying quality. Some knowledge is doctrinally established. Some is derived from intelligence. Some comes from simulations. Some comes from prior experience. Some comes from automatically generated documents. Some is uncertain, incomplete, or context-dependent.

The system must therefore support automatic weighting of:

Reliability of generated knowledge

Reliability of source materials

Confidence in automatically generated content

Evidentiary strength behind recommendations

Degree of uncertainty

Operational relevance

Timeliness

Consistency with doctrine and constraints

This is essential because AI-generated knowledge should not be treated as equally reliable by default.

A military planning system must know not only what it says, but how strongly it should be trusted.
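As a rough illustration, such weighting can be modelled as a normalized weighted average of factor scores between 0 and 1. This is only one possible scheme, not the method used in the project: the function name, the factor names, and any weight values are assumptions.

```python
def reliability_score(factors: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of factor scores, each expected in the range [0, 1].

    A factor listed in `weights` but absent from `factors` counts as 0.0,
    so missing information lowers the score instead of being ignored.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    score = 0.0
    for name, weight in weights.items():
        value = factors.get(name, 0.0)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"factor '{name}' must be within [0, 1]")
        score += weight * value
    return score / total_weight


# Hypothetical usage: the weights reflect how much each factor matters.
example = reliability_score(
    {"source_reliability": 1.0, "timeliness": 0.5},
    {"source_reliability": 2.0, "timeliness": 1.0},
)
```

Treating a missing factor as zero is a deliberately conservative choice: content with unknown provenance or unknown timeliness scores lower by default.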

4. Reinforcement Learning for continuous improvement

The fourth module is a Reinforcement Learning System for continuous improvement of the operational knowledge base.

The purpose is to allow the system to improve through use, feedback, simulation, evaluation, lessons learned, and operational review.

This does not mean uncontrolled autonomous learning.

In military planning, learning must be governed, validated, constrained, and auditable.

The objective is to improve the knowledge base and reasoning processes in relation to:

Sufficiency

Efficiency

Dominance

Operational superiority

Planning accuracy

Scenario handling

Resource coherence

Legal and doctrinal compliance

Recommendation quality

Explanatory quality

The system should learn from planning outcomes, simulations, red-team feedback, expert evaluation, and operational lessons.
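The governance requirement can be pictured as a gate in front of the knowledge base: a proposed update is applied only after explicit review, and every review is logged. The sketch below shows that gate only, not a reinforcement learning algorithm. The names `ProposedUpdate` and `GovernedKnowledgeBase` are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedUpdate:
    key: str         # knowledge-base entry to change
    new_value: str   # proposed content
    source: str      # origin, e.g. "simulation" or "expert evaluation"


@dataclass
class GovernedKnowledgeBase:
    entries: dict[str, str] = field(default_factory=dict)
    # Audit trail of (key, source, reviewer, outcome), kept for every review
    audit: list[tuple[str, str, str, str]] = field(default_factory=list)

    def review(self, update: ProposedUpdate, reviewer: str, approved: bool) -> bool:
        """Apply the update only if approved; log the outcome either way."""
        outcome = "applied" if approved else "rejected"
        if approved:
            self.entries[update.key] = update.new_value
        self.audit.append((update.key, update.source, reviewer, outcome))
        return approved
```

Because rejected proposals are logged as well, the audit trail shows what the system attempted to learn in addition to what it was allowed to learn.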

5. Integration with intelligence and command-and-control systems

The fifth module is integration.

An operational planning reasoning system cannot remain isolated.

It must integrate with intelligence and battle command-and-control systems, including systems related to:

Targeting

C5ISR

C5ISTAR

Operational intelligence

Battle command and control

Simulation environments

Scenario generation

Lessons learned systems

Operational reporting systems

This integration is necessary because planning is not an isolated staff activity. It is connected to intelligence, command, control, communications, computers, cyber, surveillance, reconnaissance, targeting, and operational execution.

A planning system that cannot connect to this ecosystem will remain partial.

From process support to reasoning superiority

The central contribution of this project is the movement from planning process support to planning reasoning superiority.

Traditional systems tend to support workflows.

Advanced AI systems may generate text, summaries, templates, or recommendations.

But BioNeuroCognitive Complex Reasoning Systems should go further.

They should help planners reason across:

Objectives

Constraints

Courses of Action

Risks

Legal conditions

Rules of Engagement (ROE)

Intelligence assumptions

Operational feasibility

Resource sufficiency

Enemy adaptation

Friendly capabilities

Scenario evolution

Expected effects

Alternative futures

Planning coherence

The objective is not to remove human planners from the process.

The objective is to make human planning faster, more coherent, more explainable, more evidence-based, and more adaptive.

A system for accountable military AI

Military AI must be accountable.

That requires more than human supervision in abstract terms.

It requires technical and methodological mechanisms that make recommendations traceable, explainable, auditable, and evidence-supported.

For that reason, the system must be designed around four principles:

Traceability

Every planning recommendation should be connected to evidence, assumptions, constraints, and reasoning paths.

Explainability

The system should explain why it recommends a specific action or planning option.

Evidentiary support

Recommendations must be grounded in doctrine, intelligence, prior experience, simulation, legal constraints, and operational logic.

Human command responsibility

AI supports planning, but human authorities remain responsible for judgement, authorization, and command.

This is the appropriate role of AI in NATO operational planning.

Not autonomous command.

Not opaque automation.

Reasoning support for responsible military decision-making.

Toward faster and better NATO Operational Planning

The future of NATO Operational Planning will require systems capable of compressing planning time without degrading planning quality.

This is difficult.

Speed often reduces rigor.

Automation often reduces transparency.

Information abundance often increases cognitive overload.

AI-generated content often creates a false impression of coherence.

The challenge is therefore to design systems that increase speed while also increasing justification, traceability, reliability, and operational coherence.

That is precisely the role of advanced BioNeuroCognitive Complex Reasoning Systems.

They can help planners move faster because they structure the planning space.

They can help planners reason better because they connect objectives, constraints, evidence, and actions.

They can help planners adapt faster because they support counter-planning and scenario comparison.

They can help commanders trust outputs more appropriately because they provide justification, confidence, and evidentiary traceability.

Final note

The project we are developing at WarMind Labs aims to provide advanced AI support for the improvement of NATO military operational planning systems.

The expected result is a new class of planning support architecture: one that combines generative AI, ontology-based operational knowledge, complex reasoning engines, evidentiary weighting, reinforcement learning, and integration with intelligence and command-and-control systems.

The objective is not more automation for its own sake.

The objective is better planning.

Faster planning.

More explainable planning.

More evidence-based planning.

More adaptive planning.

And ultimately, stronger operational superiority.

Not merely AI-generated plans.

Traceable operational reasoning.

Not more documents.

Better planning intelligence.

Not faster bureaucracy.

Superior military planning support.