Complex Inference Networks of the Real World

Why real-world intelligence requires more than generative AI

Real-world problems are rarely clean.

They do not arrive as complete datasets, well-formed prompts, or perfectly structured causal diagrams. They appear as fragments, weak signals, contradictory evidence, implicit expert knowledge, missing context, ambiguous intentions, and evolving situations.

This is precisely where many current AI models encounter their deepest limitations.

Generative AI has shown extraordinary capabilities in language, synthesis, pattern completion, coding, search augmentation, and the production of plausible explanations. But many high-stakes problems require something different from fluent generation.

They require complex reasoning over incomplete evidence.

They require the ability to justify, explain, weigh, infer, compare, and update conclusions under uncertainty.

They require reasoning structures capable of operating in the real world, where information is incomplete, credibility is uneven, actors conceal intentions, causal chains are uncertain, and the most important knowledge is often implicit rather than explicit.

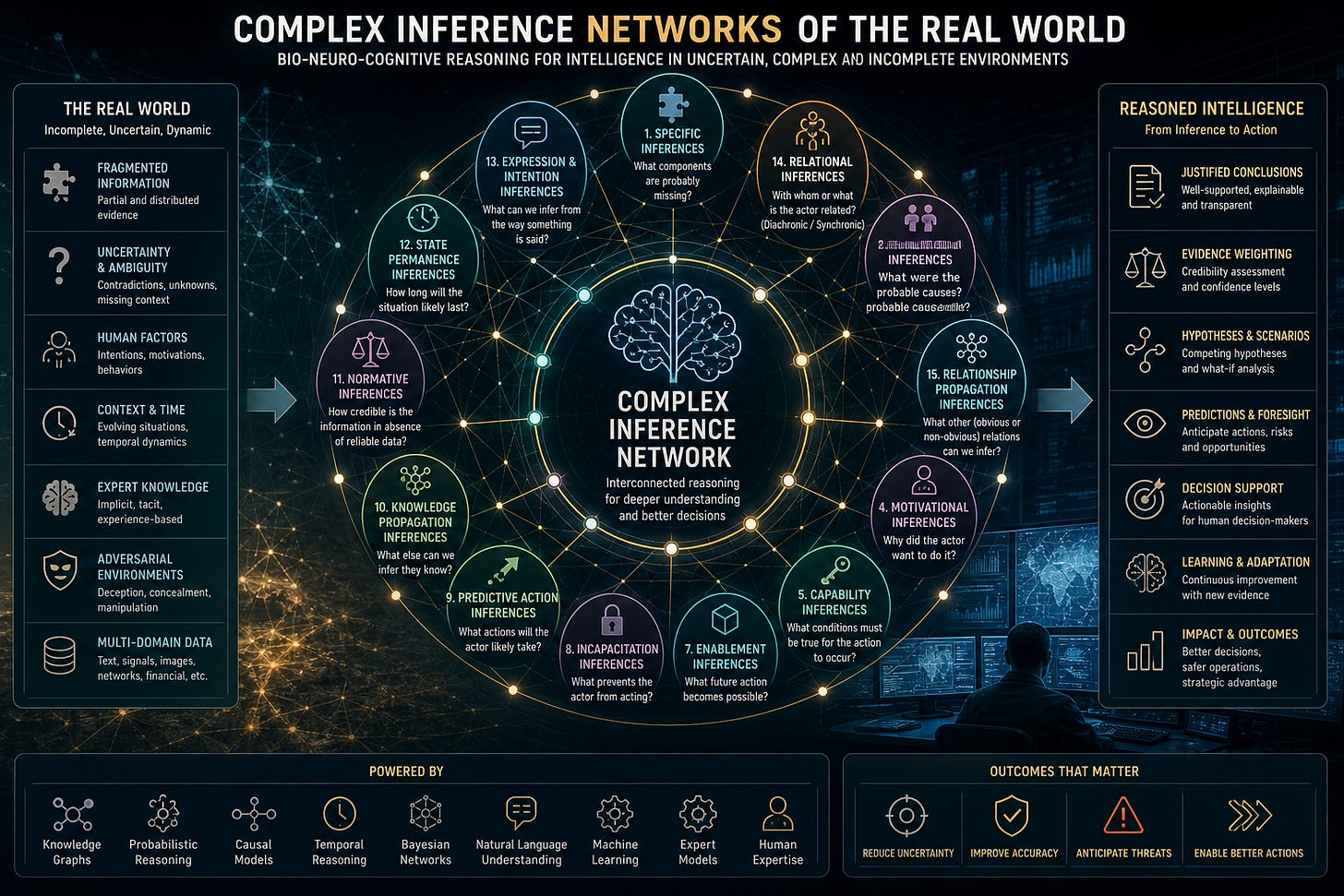

This is the space we call Complex Inference Networks of the Real World.

At Binomial Consulting & Design S.L. and WarMind Labs, we are working on the modelling of these critical inference structures for real-world intelligence problems, especially in criminal, military, terrorist, and corporate intelligence contexts.

The accompanying conceptual image frames these structures as key BioNeuroCognitive complex reasoning capabilities for military, criminal, terrorist, and corporate intelligence, organized around a central network of real-world inference types.

The problem with real-world evidence

The central difficulty is not simply lack of data.

The real difficulty is that evidence in the real world is usually:

Incomplete

Ambiguous

Distributed

Contradictory

Temporally unstable

Context-dependent

Uneven in credibility

Mixed with noise or deception

Dependent on implicit expert knowledge

In many domains, especially intelligence and investigation, the most important question is not “What does the data say?”

The better question is: What can be reasonably inferred from the evidence we have, the evidence we lack, and the context in which both appear?

That distinction matters.

A system that summarizes information is not necessarily reasoning. A system that produces a plausible answer is not necessarily weighing evidence. A system that explains fluently has not necessarily justified its conclusions.

Real-world reasoning requires a different architecture.

It must be able to ask what is missing, what is probable, what is contradictory, what is normal, what is abnormal, what is intended, what is implied, what is enabled, what is prevented, and what may happen next.

This is not a marginal capability.

It is the core of intelligence.

Explicit knowledge is not enough

Many AI systems operate primarily on explicit knowledge. They rely on massive learned representations, statistical associations, pattern completion, and broad inference mechanisms that can be powerful but often remain shallow when the problem demands evidential discipline.

This creates several difficulties in high-stakes reasoning:

Weak justification of inferential steps

Limited explanation of why one hypothesis is stronger than another

Difficulty weighing the credibility of evidence

Difficulty handling implicit expert knowledge

Difficulty reasoning over missing information

Difficulty distinguishing plausibility from probability

Difficulty maintaining precision when the situation is incomplete or adversarial

Generative AI can produce a coherent answer.

But in real-world intelligence, coherence is not enough.

The system must be able to tell us why a conclusion deserves to be believed, how strongly it should be believed, which evidence supports it, which evidence weakens it, which assumptions are being made, and what additional information would change the conclusion.

That is the difference between generation and reasoning.

What are Complex Inference Networks of the Real World?

A Complex Inference Network of the Real World is a structured set of reasoning processes designed to operate over incomplete, uncertain, and context-rich evidence.

It is not a single inference rule.

It is a network of interdependent inferential capabilities.

Each type of inference answers a different kind of question. Some infer causes. Others infer consequences. Some infer intentions. Others infer capabilities, relationships, missing components, normality, duration, or implied knowledge.

Together, they form a reasoning architecture capable of supporting human and artificial intelligence in complex domains.

The goal is not to automate judgement blindly.

The goal is to create reasoning structures that can help analysts, investigators, commanders, executives, and decision-makers reason more rigorously under uncertainty.

Specific inferences

Specific inferences address one of the most common problems in real-world analysis: incompleteness.

When a group of related elements (a network, a structure, an operation) is only partly known, the reasoning system must ask:

What conceptual components are probably missing?

For example, if an investigation identifies part of a criminal network, part of a financial structure, or part of a military operation, the question becomes whether other missing components are likely to exist.

Specific inference is not simple guessing.

It is a disciplined attempt to infer missing elements from known patterns, domain knowledge, previous cases, structural expectations, and contextual constraints.

This matters because many real-world systems are only partially visible.

The visible fragment is rarely the whole structure.

Causal inferences

Causal inferences ask:

What were the probable causes of an action, event, or state?

This is one of the most important forms of reasoning in intelligence.

An event has occurred. But why?

Was it accidental or intentional?

Was it caused by operational failure, strategic design, financial pressure, human error, deception, coercion, opportunity, ideology, organizational weakness, or external manipulation?

Causal inference is difficult because real-world causes are rarely singular. Most significant events emerge from multiple interacting conditions.

A mature reasoning system must therefore support multi-causal analysis, competing causal hypotheses, and continuous revision as new evidence appears.
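One simple way to picture competing causal hypotheses being revised as evidence arrives is a Bayesian update. The sketch below is only an illustration: the hypothesis names, priors, and likelihood values are invented, and it treats the hypotheses as mutually exclusive, which is a simplification given that real causes usually interact.

```python
def update_beliefs(priors, likelihoods):
    """Return posteriors P(h | e) from priors P(h) and likelihoods P(e | h)."""
    unnormalised = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalised.values())
    if total == 0:
        raise ValueError("evidence is impossible under every hypothesis")
    return {h: value / total for h, value in unnormalised.items()}


# Hypothetical starting beliefs about why an event occurred.
beliefs = {"human_error": 0.5, "operational_failure": 0.3, "deception": 0.2}

# Each item gives P(evidence | hypothesis) for one new piece of evidence.
# The numbers are illustrative assumptions, not measured values.
evidence_stream = [
    {"human_error": 0.2, "operational_failure": 0.3, "deception": 0.8},
    {"human_error": 0.1, "operational_failure": 0.5, "deception": 0.6},
]

for likelihoods in evidence_stream:
    beliefs = update_beliefs(beliefs, likelihoods)

# Rank the competing hypotheses from most to least supported.
ranked = sorted(beliefs.items(), key=lambda item: item[1], reverse=True)
for hypothesis, probability in ranked:
    print(f"{hypothesis}: {probability:.3f}")
```

With these invented numbers the initially least likely hypothesis ends up ranked first, which is the kind of revision the text describes. A multi-causal model would need a richer structure than a single normalised distribution.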

Resultative inferences

Resultative inferences ask:

What are the probable results or effects of an action or state?

If an actor moves, what may follow?

If a company changes strategy, what effects may appear in the market?

If a criminal group loses a logistics route, how might it adapt?

If a military unit changes posture, what operational consequences may emerge?

If a terrorist cell acquires a certain capability, what risk trajectory follows?

Resultative inference is essential because intelligence is not only about understanding what has happened. It is about anticipating what may happen next.

A reasoning system must therefore connect actions and states with likely effects in the world.

Motivational inferences

Motivational inferences ask:

Why did an actor want to perform an action? What were the actor’s intentions?

This is especially important in adversarial domains.

Actors do not merely act. They act for reasons, even when those reasons are hidden, irrational, ideological, opportunistic, strategic, emotional, or mixed.

Understanding motivation helps distinguish between:

Opportunistic action

Strategic action

Coerced action

Symbolic action

Preparatory action

Retaliatory action

Deceptive action

Escalatory action

Without motivational inference, intelligence remains superficial. It describes behavior but does not understand intent.

Capability inferences

Capability inferences ask:

What states of the world must be true, or must have been true, for an action to take place?

This is a powerful investigative question.

If an actor performed a certain action, what capabilities did they need?

What access did they require?

What knowledge did they possess?

What resources must have been available?

What permissions, tools, networks, routes, platforms, or collaborators were necessary?

Capability inference helps reverse-engineer hidden structures from observed actions.

If the action happened, the conditions that enabled it must be investigated.
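As a minimal sketch of this reverse-engineering step, an observed action can be mapped to the conditions it presupposes, and the unconfirmed ones become lines of inquiry. The action name, the prerequisite table, and the function name are all hypothetical; in practice the table would come from domain experts and previous cases.

```python
# Hypothetical domain knowledge: what must be true for each action to occur.
PREREQUISITES = {
    "unauthorised_database_export": {
        "valid_credential",
        "network_access",
        "knowledge_of_schema",
    },
}


def infer_required_conditions(action, confirmed):
    """Split an action's prerequisites into confirmed and still-open conditions."""
    if action not in PREREQUISITES:
        raise KeyError(f"no prerequisite model for action: {action}")
    required = PREREQUISITES[action]
    return {
        "required": sorted(required),
        "confirmed": sorted(required & confirmed),
        "to_investigate": sorted(required - confirmed),
    }


# The action was observed, but only one enabling condition is established so far.
result = infer_required_conditions(
    "unauthorised_database_export", confirmed={"valid_credential"}
)
print(result["to_investigate"])
```

The same table could in principle be read the other way round for incapacitation questions: if an actor wants to perform the action and does not, the missing prerequisites are candidate explanations.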

Functional inferences

Functional inferences ask:

Why do people want or possess certain physical or abstract objects?

This may sound simple, but it is strategically important.

Objects have functions.

A device, document, vehicle, account, credential, weapon, software tool, property, company, domain name, communication channel, legal structure, or financial instrument may exist because it enables something.

Functional inference asks what that thing is for.

In intelligence work, possession is rarely neutral. What an actor possesses may reveal what the actor can do, intends to do, or is preparing to do.

Enablement inferences

Enablement inferences ask:

If a person wants a particular state of the world to exist, is it because that state will enable a predictable action?

This type of inference connects desire, condition, and future action.

An actor may seek access to a building, control of a company, proximity to a person, possession of a credential, influence over a channel, or entry into a territory because that condition enables a later move.

Enablement inference is therefore anticipatory.

It helps analysts understand why certain preparatory actions matter before the final action becomes visible.

Incapacitation inferences

Incapacitation inferences ask:

If a person cannot perform an action they want to perform, can this be explained by a missing prerequisite state of the world?

In other words, what is preventing the actor?

A blocked account, lack of access, missing expertise, insufficient logistics, social resistance, legal pressure, operational surveillance, loss of trust, lack of funding, or technical failure may explain why an intended action does not occur.

This is important because inactivity can be informative.

Sometimes an actor does not act because they changed intention.

Sometimes they do not act because they cannot.

The difference matters.

Predictive action inferences

Predictive action inferences ask:

Knowing the needs and desires of a person or organization, what actions are they likely to perform to achieve those desires?

This is central to strategic intelligence.

If we understand goals, constraints, capabilities, motivations, and available means, we can infer probable courses of action.

This does not mean deterministic prediction. Human and organizational behavior remains uncertain.

But predictive action inference can generate ranked hypotheses about likely future behavior, especially when combined with evidence, context, and domain expertise.

The objective is not prophecy.

The objective is anticipatory reasoning.

Knowledge propagation inferences

Knowledge propagation inferences ask:

If a person knows certain things, what else can we predict that they also know?

Knowledge is rarely isolated.

If an actor knows a password, perhaps they know the system architecture. If they know a meeting point, perhaps they know the network. If they know a financial route, perhaps they know the intermediaries. If they know a tactical procedure, perhaps they have received specific training.

This type of inference is crucial in criminal, terrorist, military, and corporate intelligence.

What an actor knows can reveal what they have accessed, who they are connected to, what role they play, and what they may do next.

Normative inferences

Normative inferences ask:

Given what is normal in the real world, how strongly should we believe a report when reliable or verifiable data is absent?

This is one of the most subtle forms of reasoning.

Sometimes the analyst must evaluate a claim before full verification is possible.

In those cases, the system must compare the claim with what is normal, expected, typical, atypical, rare, impossible, or suspicious in a given context.

Normative inference does not replace verification.

It supports provisional judgement when verification is incomplete.

It helps determine whether a claim deserves attention, skepticism, escalation, or dismissal.

State permanence inferences

State permanence inferences ask:

How long can a situation be expected to last?

Some states are brief.

Some are stable.

Some decay quickly.

Some persist unless actively disrupted.

Some appear temporary but reveal structural change.

In intelligence analysis, estimating the duration of a state is essential. A vulnerability, alliance, conflict, opportunity, risk condition, operational window, market situation, or social tension each has its own temporal dynamics.

A reasoning system must therefore ask not only what is true, but how long it is likely to remain true.

Expression and intention inferences

Expression and intention inferences ask:

What can be inferred from the way something is said?

The form of expression matters.

Tone, ambiguity, omission, emphasis, timing, channel, audience, rhetorical structure, emotional intensity, and linguistic framing can all carry inferential value.

In intelligence contexts, speech and communication are not merely containers of content. They are actions in themselves.

The way something is said may indicate fear, deception, confidence, coercion, hierarchy, intent, preparation, signaling, or strategic ambiguity.

This type of inference is especially relevant in human intelligence, criminal communications, extremist discourse, corporate negotiation, and strategic messaging.

Relational inferences

Relational inferences ask:

With whom, or with what, is an actor related?

These inferences may be diachronic or synchronic.

Diachronic relational inference looks across time. It asks who or what an actor has been connected to historically.

Synchronic relational inference looks within the period of an action or event. It asks who or what an actor was connected to during the development of that action or event.

This distinction matters because relationships change.

A past relationship may explain background, training, trust, access, ideology, or opportunity.

A present relationship may explain action, coordination, concealment, support, or operational capability.

Both are necessary.

Propagation of obvious and non-obvious relations

Relationship propagation inferences ask:

If we know that an actor is related to certain entities, what other entities can we reasonably infer are also related to that actor?

Some relationships are obvious.

Others are hidden, indirect, mediated, or deliberately concealed.

A person may be connected to a company through a relative, to a criminal network through a logistics provider, to a political actor through an intermediary, or to a digital infrastructure through a service account.

This is where complex inference networks become especially valuable.

They allow analysts to move beyond direct links and investigate second-order, third-order, and non-obvious relations.

The objective is not guilt by association.

The objective is disciplined relational reasoning.
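Second-order and third-order relations can be sketched as a breadth-first search over a graph of known links. The entities and links below are invented for illustration, and the function names are assumptions. The search keeps the full path to each inferred entity, so every indirect relation carries the chain of links that justifies it.

```python
from collections import deque


def build_graph(links):
    """Build an undirected adjacency map from (entity, entity) pairs."""
    graph = {}
    for a, b in links:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def propagate_relations(graph, start, max_order=3):
    """Return {entity: path} for entities within max_order links of start."""
    paths = {start: [start]}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if len(paths[node]) - 1 >= max_order:
            continue  # do not expand beyond the requested order
        for neighbour in sorted(graph.get(node, ())):
            if neighbour not in paths:
                paths[neighbour] = paths[node] + [neighbour]
                queue.append(neighbour)
    del paths[start]
    return paths


# Hypothetical known links.
links = [
    ("person_a", "relative_b"),
    ("relative_b", "company_c"),
    ("company_c", "logistics_d"),
    ("logistics_d", "network_e"),
]
paths = propagate_relations(build_graph(links), "person_a", max_order=3)

# Relations of order two or more are the non-obvious ones.
non_obvious = {entity: path for entity, path in paths.items() if len(path) > 2}
for entity, path in non_obvious.items():
    print(entity, "via", " -> ".join(path))
```

A path here is only a candidate relation to be examined. Weighting links by credibility, or by how recent they are, would be a natural extension that this sketch leaves out.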

Why these inferences must be networked

Each inference type is useful on its own.

But the real value emerges when they are connected.

A motivational inference may depend on a relational inference.

A causal inference may require a capability inference.

A predictive action inference may require knowledge propagation.

A normative inference may affect the credibility of a causal hypothesis.

A state permanence inference may change operational prioritization.

An enablement inference may reveal why an apparently minor action matters.

This is why we speak of Complex Inference Networks, not isolated inference modules.

Real-world reasoning is networked because the world itself is networked.

Actors, intentions, causes, capabilities, effects, relationships, knowledge, norms, and time all interact.

The architecture must reflect that.
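The dependencies named above can be written down as a small graph and ordered so that each inference runs after the ones it relies on. This sketch uses `graphlib.TopologicalSorter` from the Python standard library (3.9+). The dependency table encodes only three of the examples from the text and is otherwise an assumption, not a description of any actual system.

```python
from graphlib import TopologicalSorter

# Each inference type maps to the inference types it depends on.
DEPENDS_ON = {
    "motivational": {"relational"},
    "causal": {"capability"},
    "predictive_action": {"knowledge_propagation"},
}

# static_order() yields every node after all of its dependencies.
order = list(TopologicalSorter(DEPENDS_ON).static_order())
print(order)
```

A strict ordering only works when the dependencies contain no cycles; `TopologicalSorter` raises `CycleError` otherwise. If inference types inform one another in both directions, an iterative scheme that re-evaluates until conclusions stabilise would be a better fit than a single pass.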

Applications in intelligence domains

At Binomial Consulting & Design S.L. and WarMind Labs, we are modelling these inference structures for several high-complexity domains.

These include:

Criminal intelligence

Military intelligence

Counterterrorism intelligence

Corporate intelligence

The specific domain changes, but the reasoning problem remains similar.

In all these areas, analysts must work with incomplete information, hidden actors, uncertain intentions, complex causal chains, adversarial behavior, and high operational consequences.

A simple generative system is not enough.

A dashboard is not enough.

A database is not enough.

A search engine is not enough.

The critical requirement is a reasoning architecture capable of working with evidence, uncertainty, expertise, and action.

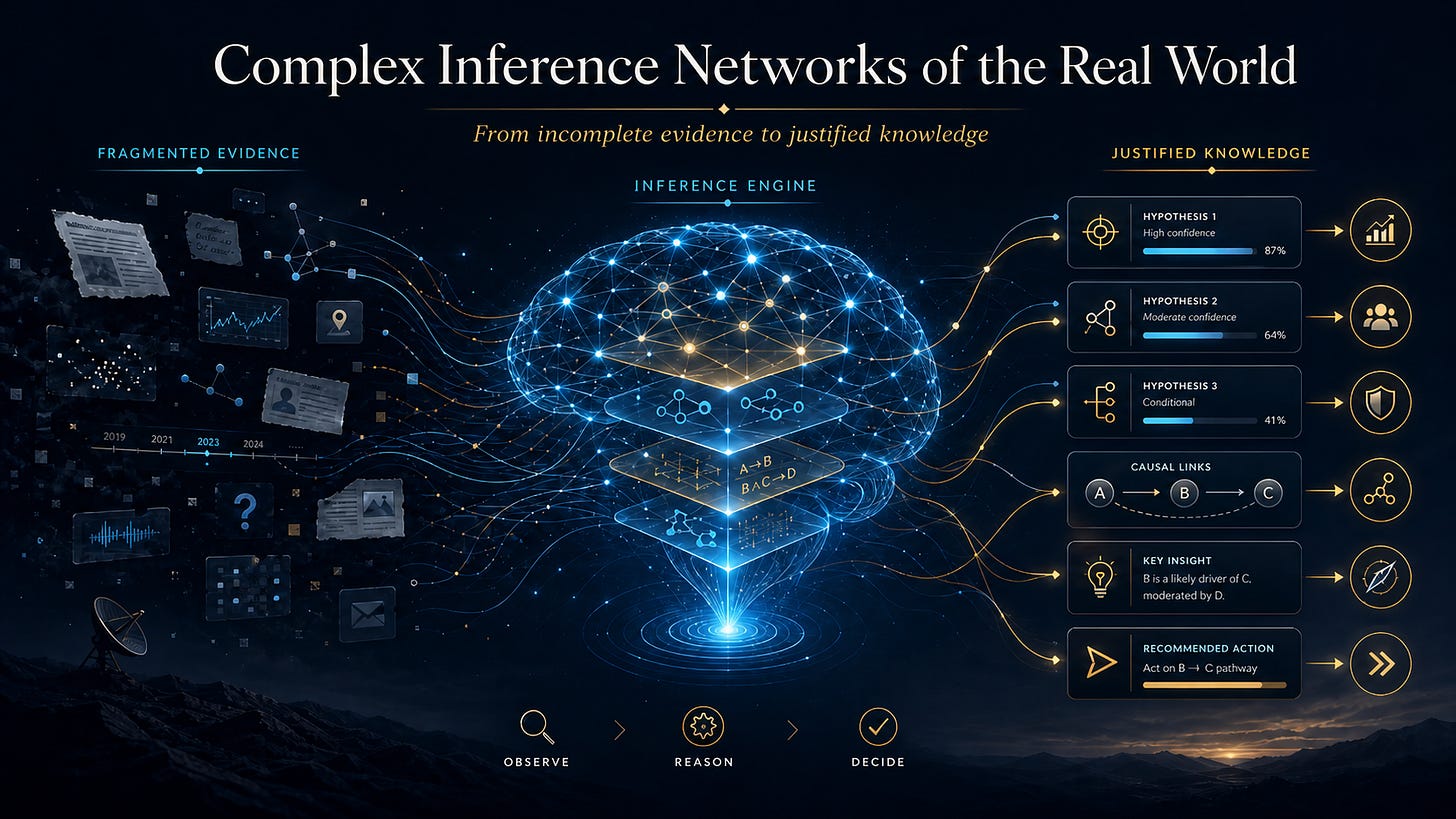

From plausible answers to justified knowledge

The future of AI in complex domains will not be defined only by systems that can generate answers.

It will be defined by systems that can justify knowledge.

This means systems able to show:

Which evidence supports a conclusion

Which evidence contradicts it

Which assumptions are being made

Which inference path was followed

How credible the reasoning process is

What degree of confidence is justified

What information is missing

What would change the conclusion

What action should or should not follow

That is the standard required for intelligence, investigation, strategy, and operations.

A model that only produces an answer is not enough.

A real-world reasoning system must produce an answer, a path, a justification, a confidence structure, and a way to revise itself.
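As a rough illustration of what such an output could look like as a data structure, the sketch below bundles a claim with its evidence, assumptions, inference path, confidence, and a revision method. The class name, field names, and example values are all hypothetical, and confidence is simply supplied by the caller rather than computed.

```python
from dataclasses import dataclass, field


@dataclass
class JustifiedConclusion:
    claim: str
    confidence: float  # between 0 and 1, assigned by the analyst or model
    supporting: list = field(default_factory=list)
    contradicting: list = field(default_factory=list)
    assumptions: list = field(default_factory=list)
    inference_path: list = field(default_factory=list)
    missing_information: list = field(default_factory=list)

    def revise(self, evidence, supports, new_confidence):
        """Record new evidence and the confidence it now justifies."""
        if not 0.0 <= new_confidence <= 1.0:
            raise ValueError("confidence must be between 0 and 1")
        target = self.supporting if supports else self.contradicting
        target.append(evidence)
        self.inference_path.append(f"revised on: {evidence}")
        self.confidence = new_confidence


# Hypothetical example.
conclusion = JustifiedConclusion(
    claim="The account is controlled by an intermediary",
    confidence=0.6,
    supporting=["login pattern matches intermediary's working hours"],
    assumptions=["login timestamps are not spoofed"],
    missing_information=["device identifiers for the sessions"],
)
conclusion.revise(
    "a second user was seen operating the account", supports=False,
    new_confidence=0.4,
)
print(conclusion.confidence, conclusion.contradicting)
```

The point of the structure is that the answer never travels without its justification: anyone reading the conclusion can see what supports it, what weakens it, and what would change it.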

Toward BioNeuroCognitive complex reasoning

The work we are developing is part of a broader BioNeuroCognitive approach to AI.

The objective is not to imitate human cognition superficially, nor to add symbolic labels to generative models as an afterthought.

The objective is to model the reasoning structures required by real-world intelligence.

This means integrating:

Evidence-based reasoning

Causal reasoning

Motivational reasoning

Relational reasoning

Temporal reasoning

Normative reasoning

Predictive reasoning

Knowledge propagation

Hypothesis generation and revision

Human expert judgement

Artificial inference support

The result should not be an AI that merely speaks about the world.

It should be an AI architecture that reasons within the world.

The real frontier

The real frontier of AI is not only generation.

It is not only multimodality.

It is not only agentic execution.

It is not only tool use.

The real frontier is the ability to reason over the uncertain, incomplete, adversarial, implicit, and evolving structures of the real world.

That is where criminal, military, terrorist, and corporate intelligence problems live.

That is where the limitations of current AI become visible.

And that is where Complex Inference Networks of the Real World become necessary.

The next stage of AI will not be defined by systems that produce more text.

It will be defined by systems that produce better-justified knowledge.

Not more plausibility.

More reasoning.

Not more fluent answers.

Stronger inference.

Not more information.

Better judgement.